ChatGPT surpassed 400 million weekly active users in early 2025. A growing share of what those users ask is product research: “what’s the best tool for X,” “compare A vs B,” “what do people use for Y.” Most marketing teams have no idea whether their brand appears in those answers.

The problem isn’t awareness — it’s that the standard analytics stack wasn’t built for this. Google Analytics shows a trickle of referral traffic from chatgpt.com. That undercounts significantly. Many ChatGPT users copy an answer and visit a URL directly, leaving no referral trace. Others act on a recommendation days later. The channel is larger than your dashboards suggest, and measuring it requires a different approach.

This guide covers four methods for tracking your ChatGPT visibility, what each one tells you, and where each falls short.

Why you can’t rely on referral traffic alone

ChatGPT referral traffic in Google Analytics is real but incomplete. When ChatGPT cites a source inline and a user clicks the link, that shows up as a referral from chatgpt.com. But ChatGPT often recommends brands without linking to them. It names a product, describes it, and moves on. The user searches for the brand directly or navigates there later — and that conversion looks like direct or organic traffic.

The referral traffic you do see is the floor, not the ceiling. Use it as a directional signal, not the primary metric.
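If you export raw session data, a small classifier can separate the visible ChatGPT referrals from everything else. This is a minimal sketch: the `sessions` records, the host list, and the `utm_source=chatgpt.com` check are assumptions for illustration, not fields your analytics tool is guaranteed to expose.

```python
from urllib.parse import urlparse, parse_qs

# Assumption: hostnames to treat as ChatGPT referrers. Adjust to what your data shows.
CHATGPT_HOSTS = {"chatgpt.com", "chat.openai.com"}

def is_chatgpt_session(referrer: str, landing_url: str) -> bool:
    """Return True if the referrer host or the utm_source parameter points at ChatGPT."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    if host in CHATGPT_HOSTS:
        return True
    # Assumption: some outbound ChatGPT links carry utm_source=chatgpt.com.
    utm = parse_qs(urlparse(landing_url).query).get("utm_source", [""])[0].lower()
    return utm in CHATGPT_HOSTS

# Hypothetical session records: (referrer, landing URL).
sessions = [
    ("https://chatgpt.com/", "https://example.com/pricing"),
    ("", "https://example.com/?utm_source=chatgpt.com"),
    ("https://www.google.com/", "https://example.com/"),
]

chatgpt_sessions = sum(is_chatgpt_session(ref, url) for ref, url in sessions)
print(f"{chatgpt_sessions} of {len(sessions)} sessions are attributable to ChatGPT")
```

Whatever this count turns out to be, it only covers clicks that carried a trace. Direct visits prompted by a recommendation stay invisible here.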

Method 1: Dedicated AEO tracking tools

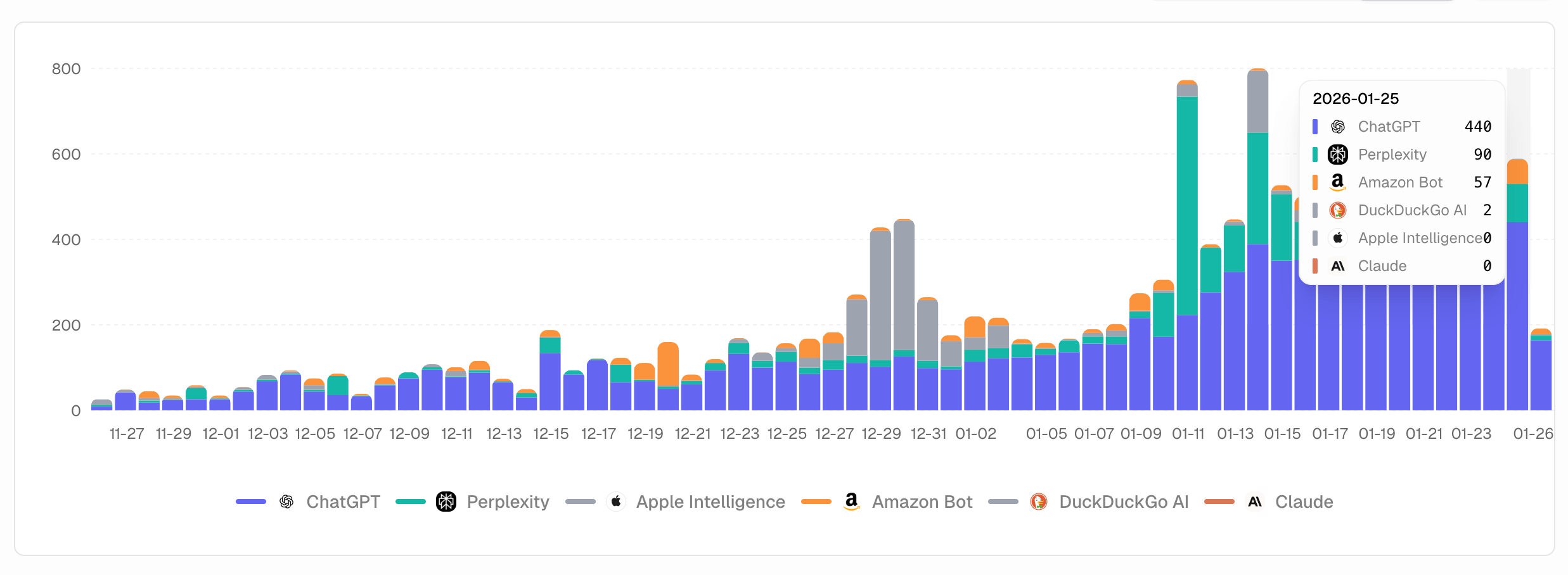

This is the most systematic approach. AEO tools work by running a set of prompts against ChatGPT on a scheduled basis — daily or weekly — and recording whether your brand appears in the responses, in what context, and alongside which competitors.

The core metrics they surface:

- Mention rate — the percentage of relevant prompts in which your brand appears

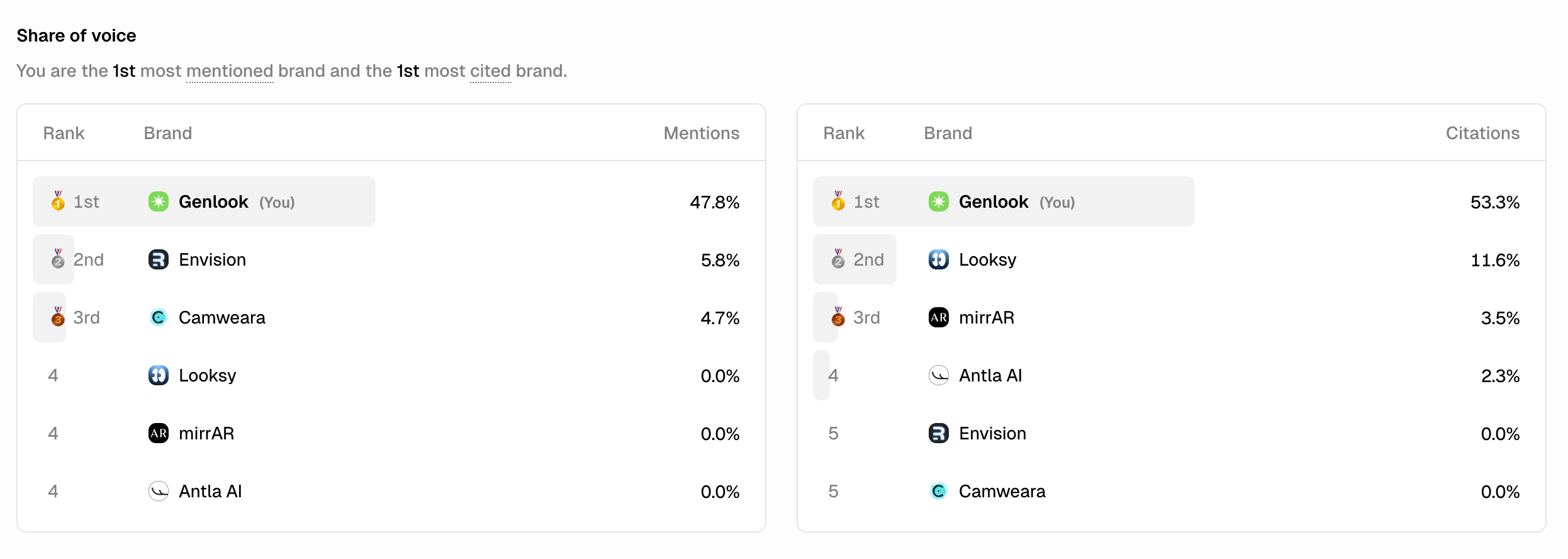

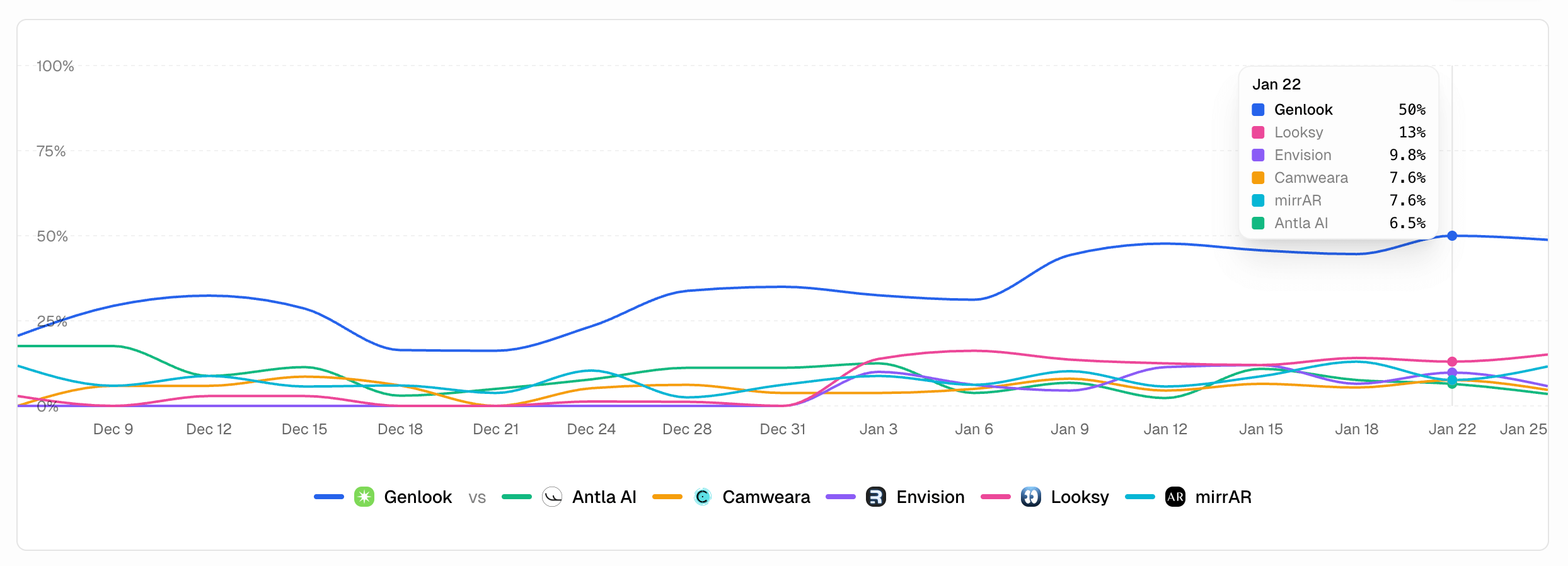

- Share of voice — your mentions relative to competitors across the same prompt set

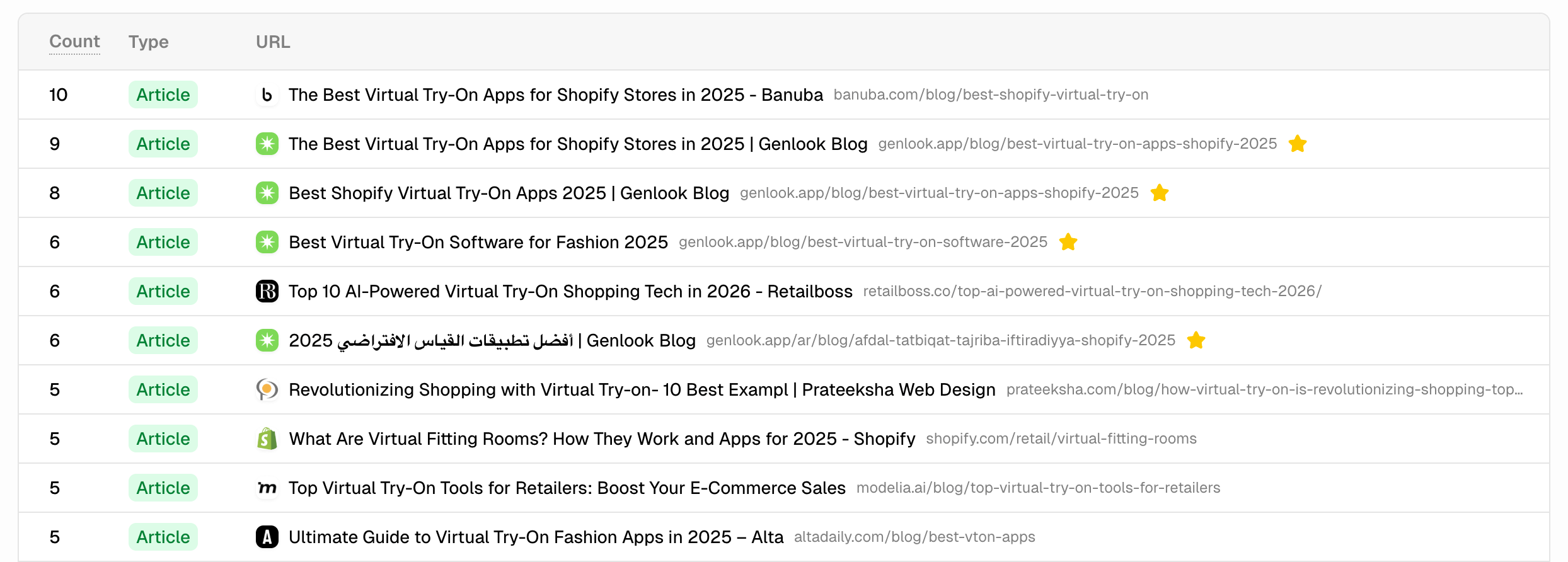

- Cited sources — which pages ChatGPT pulls from when discussing your category

- Sentiment — whether mentions are positive, neutral, or negative

The cited sources metric is often the most actionable. Teams regularly discover they’re mentioned in 40–50% of prompts for their category, but the source ChatGPT cites is a competitor’s comparison page, a third-party review site, or a Reddit thread — not their own content. That’s the gap to close.
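To make these metrics concrete, here is a rough sketch of how they could be computed from recorded prompt runs. The `runs` structure, the brand names, and the URLs are hypothetical, and share of voice is calculated here as your brand's mentions divided by all brand mentions, which is one common definition rather than a standard.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical records: one per prompt run, with the brands named and the URLs cited.
runs = [
    {"prompt": "best crm for startups", "brands": ["Acme", "Globex"],
     "cited": ["https://globex.example/compare"]},
    {"prompt": "compare acme and globex", "brands": ["Acme", "Globex"],
     "cited": ["https://reviews.example/acme-vs-globex"]},
    {"prompt": "crm for freelancers", "brands": ["Globex"],
     "cited": ["https://www.reddit.com/r/example/1"]},
    {"prompt": "what crm do agencies use", "brands": ["Acme", "Initech"],
     "cited": ["https://acme.example/agencies"]},
]

def mention_rate(runs, brand):
    """Fraction of runs in which `brand` appears at all."""
    return sum(brand in r["brands"] for r in runs) / len(runs)

def share_of_voice(runs, brand):
    """Mentions of `brand` divided by mentions of all brands across the same runs."""
    counts = Counter(b for r in runs for b in r["brands"])
    total = sum(counts.values())
    return counts[brand] / total if total else 0.0

def cited_domains(runs, brand):
    """Domains cited in the runs where `brand` was mentioned."""
    return Counter(
        urlparse(url).hostname
        for r in runs if brand in r["brands"]
        for url in r["cited"]
    )

print(f"Mention rate:   {mention_rate(runs, 'Acme'):.0%}")
print(f"Share of voice: {share_of_voice(runs, 'Acme'):.0%}")
print(f"Cited domains:  {dict(cited_domains(runs, 'Acme'))}")
```

In this made-up data, the brand is mentioned in most runs but only one of the cited domains is its own, which is the kind of gap the cited sources metric is meant to expose.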

What to look for in a tool:

- Prompt-based tracking (not keyword-based — ChatGPT doesn’t work like keyword search)

- Daily or near-daily refresh, because ChatGPT’s responses vary from run to run and shift as the live web sources it draws on change

- Competitor benchmarking in the same prompt runs

- Source-level citation data, not just brand mention counts

Tools worth evaluating: Airefs ($24/mo, includes Reddit monitoring), Peec AI ($89/mo, UI scraping for accuracy), Profound ($99/mo Starter, enterprise-grade), Promptwatch ($99/mo, 10 AI platforms). See the full comparison of ChatGPT rank tracking tools.

Method 2: Manual prompt testing

Before committing to a paid tool, manual testing tells you quickly whether you have a visibility problem worth solving.

Open ChatGPT and run 10–15 prompts that your target customers would plausibly ask:

- “What’s the best [your category] tool for [use case]?”

- “Compare [your brand] and [competitor]”

- “What do people recommend for [problem you solve]?”

- “What tools does [your typical customer] use for X?”

Record whether your brand appears, in what position, and which sources ChatGPT cites in its footnotes.

The limits of manual testing: ChatGPT’s responses are non-deterministic. The same prompt returns different answers on different runs. A single test tells you what ChatGPT said once, not what it typically says. For reliable data, you’d need to run each prompt 5–10 times and track across weeks — which is what AEO tools automate.
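One way to see why a single run says so little is to put a confidence interval around the observed mention rate. The sketch below uses the Wilson score interval, a standard formula for proportions. The run counts are made-up examples, and treating runs as independent samples is a simplifying assumption.

```python
import math

def wilson_interval(hits: int, runs: int, z: float = 1.96):
    """Wilson score interval for a proportion (z = 1.96 is roughly 95% confidence)."""
    if runs <= 0:
        raise ValueError("need at least one run")
    p = hits / runs
    denom = 1 + z**2 / runs
    centre = (p + z**2 / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)

# Hypothetical results: brand mentioned in 1 of 1, 6 of 10, and 30 of 50 runs.
for hits, runs in [(1, 1), (6, 10), (30, 50)]:
    low, high = wilson_interval(hits, runs)
    print(f"{hits}/{runs} runs: plausible mention rate {low:.0%} to {high:.0%}")
```

The interval narrows as the number of runs grows, which is the statistical reason behind repeating each prompt several times before drawing conclusions.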

Manual testing is useful for a quick diagnostic. It’s not a tracking method.

Method 3: Signup source surveys

This is the highest-signal method and the most underused.

Ask every new signup how they found you. A simple free-text or dropdown field during onboarding — “How did you hear about us?” — consistently surfaces ChatGPT and other AI platforms as acquisition sources at rates that automated tools undercount.

When someone types “I asked ChatGPT which tool to use and it recommended you,” that’s a direct conversion you can attribute. No estimation, no sampling, no proxy metrics. It’s also the kind of evidence that builds internal confidence in AEO as a channel — harder to dismiss than mention rate percentages.

Implementation notes:

- Free-text outperforms dropdown for this use case. Dropdowns require you to predict the options; AI referrals weren’t a common dropdown choice 18 months ago.

- Ask at signup, not later. Response rates drop significantly after the session ends.

- Review responses weekly. The pattern shows up quickly once you’re looking for it.

This method doesn’t scale into a dashboard, but it provides ground truth that complements the quantitative data from AEO tools.
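For the weekly review, a simple pattern match can pre-sort free-text answers before you read them. This is a sketch under assumptions: the pattern list is hand-maintained and incomplete, and the sample answers are invented.

```python
import re

# Assumption: a small, hand-maintained pattern list. Extend it as new answers appear.
AI_PATTERN = re.compile(r"\b(chat\s?gpt|gpt|perplexity|claude|ai)\b", re.IGNORECASE)

def mentions_ai(answer: str) -> bool:
    """Return True if a free-text survey answer names an AI assistant."""
    return bool(AI_PATTERN.search(answer))

# Hypothetical survey answers.
answers = [
    "I asked ChatGPT which tool to use and it recommended you",
    "A friend said it was good",
    "Google search",
    "an AI assistant suggested it",
]

ai_answers = [a for a in answers if mentions_ai(a)]
print(f"{len(ai_answers)} of {len(answers)} signups mention an AI assistant")
```

Treat the match as a first pass only. Reading the answers themselves is still where the useful detail comes from.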

Method 4: AI crawler logs

ChatGPT is powered by OpenAI’s models, which are trained in part on web content crawled by GPTBot. Monitoring when GPTBot visits your site is an indirect signal: pages that get crawled more frequently plausibly have a better chance of being included in training data and cited in responses.

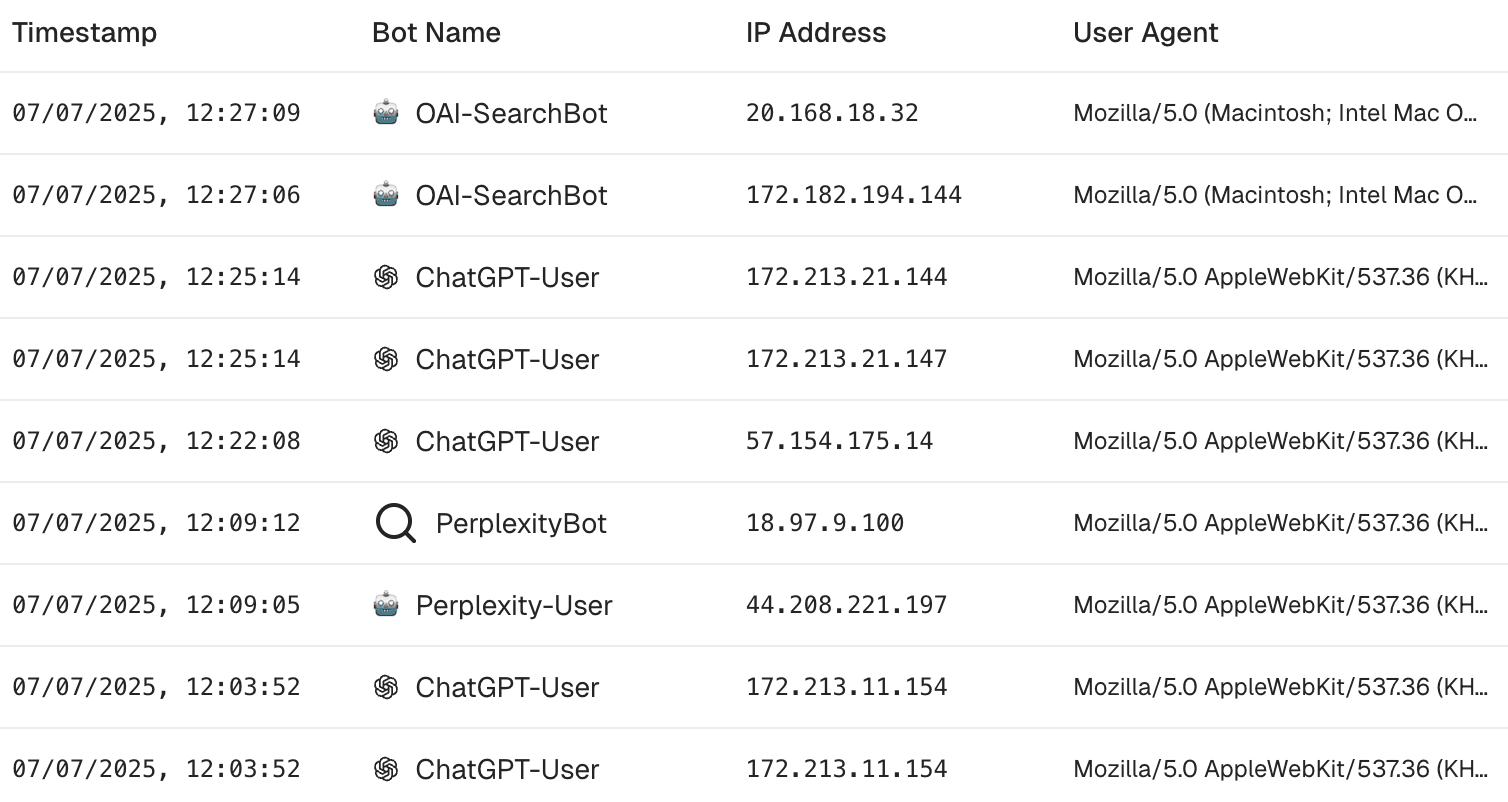

To check crawler activity manually:

- Filter your server logs or CDN logs for GPTBot in the user agent string

- Note which pages are being crawled, at what frequency, and whether crawl volume correlates with your mention rate data from AEO tools
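The manual log check can be sketched in a few lines. This example assumes your logs use the common combined log format, and the sample lines are invented. CDN exports often use a different layout, so the pattern would need adjusting.

```python
import re
from collections import Counter

# Matches the request path and user agent in a combined-log-format line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)

def crawler_hits(lines, bot="GPTBot"):
    """Count requests per path whose user agent contains `bot` (case-insensitive)."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if match and bot.lower() in match["ua"].lower():
            hits[match["path"]] += 1
    return hits

# Hypothetical log lines for illustration only.
sample = [
    '203.0.113.7 - - [12/Mar/2025:08:15:02 +0000] "GET /pricing HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)"',
    '203.0.113.7 - - [12/Mar/2025:08:15:09 +0000] "GET /blog/aeo HTTP/1.1" 200 9120 "-" "Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)"',
    '198.51.100.4 - - [12/Mar/2025:09:01:44 +0000] "GET /about HTTP/1.1" 200 2048 "https://example.com/" "Mozilla/5.0 (X11; Linux x86_64) Firefox/124.0"',
    '203.0.113.7 - - [13/Mar/2025:08:15:02 +0000] "GET /pricing HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)"',
]

for path, count in crawler_hits(sample).most_common():
    print(f"{path}: {count} GPTBot requests")
```

Passing a different `bot` value, such as `"PerplexityBot"` or `"ClaudeBot"`, gives the same breakdown for other crawlers.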

AEO tools like Airefs surface this data directly in the dashboard — crawler impressions by bot (GPTBot, PerplexityBot, ClaudeBot, and others), broken down by page and over time. That removes the need to dig through raw server logs and makes it easy to correlate crawler activity with mention rate trends.

You can also check your robots.txt to confirm you’re not accidentally blocking GPTBot — a surprisingly common issue when teams apply catch-all rules that block unfamiliar bots.
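A quick way to test this is Python's standard `urllib.robotparser` module, which evaluates robots.txt rules for a given user agent. The robots.txt contents and URL below are hypothetical. The first file shows the catch-all pattern that blocks GPTBot by accident, and the second adds an explicit allow rule.

```python
from urllib.robotparser import RobotFileParser

def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if `user_agent` may fetch `url` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)

# Hypothetical robots.txt: allows Googlebot, blocks every other bot.
catch_all = """\
User-agent: Googlebot
Allow: /

User-agent: *
Disallow: /
"""

# Same file with an explicit rule for GPTBot.
with_gptbot = """\
User-agent: GPTBot
Allow: /

""" + catch_all

url = "https://example.com/pricing"
print("catch-all rules, GPTBot allowed:", is_allowed(catch_all, "GPTBot", url))
print("explicit rule, GPTBot allowed:  ", is_allowed(with_gptbot, "GPTBot", url))
```

To check a live site instead of a string, `RobotFileParser` also supports `set_url()` followed by `read()`.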

What this tells you: Crawler activity is a leading indicator. If GPTBot is crawling a page regularly, that page has a higher chance of being indexed and cited. If key pages aren’t being crawled, that’s a technical gap to fix — not a content gap.

What it doesn’t tell you: Crawler visits don’t confirm your content is being cited. A page can be crawled without influencing responses meaningfully.

What to measure and how often

Once you have tracking in place, focus on four metrics:

1. Mention rate by prompt category. Which question types include your brand, and which don’t? A brand mentioned in 60% of “best tool for X” prompts but 5% of “compare X and Y” prompts has a different problem than one with uniformly low visibility.

2. Competitor share of voice. Your mention rate in isolation means little. A 30% mention rate is strong if competitors average 15%; it’s weak if the category leader gets cited on every run. Track relative position, not absolute numbers.

3. Cited source breakdown. Which pages does ChatGPT pull from when mentioning your brand? Your own site, third-party reviews, comparison articles, Reddit threads? The source tells you where to invest: updating your own content, getting coverage on external sites, or participating in relevant communities.

4. Self-reported attribution from signups. Run a monthly tally of how many new signups mention ChatGPT or AI assistants as their discovery channel, and watch the trend. Because survey answers capture recommendations that never produce a referral click, this number can rise faster than your AEO tool metrics suggest.

Review these monthly. ChatGPT’s responses don’t shift overnight — weekly or daily variance is noise. Monthly trends are the signal.
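If you collect observations daily or weekly, rolling them up by month and prompt category gives you the trend view described here. The sketch below assumes a simple, hypothetical record format of date, category, and whether the brand was mentioned.

```python
from collections import defaultdict

# Hypothetical observations: (ISO date, prompt category, brand mentioned?).
observations = [
    ("2025-03-03", "best-tool", True),
    ("2025-03-10", "best-tool", True),
    ("2025-03-17", "best-tool", False),
    ("2025-03-24", "best-tool", True),
    ("2025-03-05", "comparison", False),
    ("2025-03-19", "comparison", False),
    ("2025-04-02", "best-tool", True),
    ("2025-04-09", "best-tool", True),
    ("2025-04-04", "comparison", True),
    ("2025-04-18", "comparison", False),
]

def monthly_mention_rate(observations):
    """Aggregate observations into a mention rate per (month, category)."""
    totals = defaultdict(lambda: [0, 0])  # (month, category) -> [mentions, runs]
    for day, category, mentioned in observations:
        key = (day[:7], category)  # "YYYY-MM" prefix of the ISO date
        totals[key][0] += int(mentioned)
        totals[key][1] += 1
    return {key: hits / runs for key, (hits, runs) in sorted(totals.items())}

for (month, category), rate in monthly_mention_rate(observations).items():
    print(f"{month}  {category:<12} {rate:.0%}")
```

Keeping the category in the key preserves the split between question types, so a gain in one category is not hidden by a flat overall average.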

Getting more visibility once you’re tracking it

Tracking tells you where you stand. Improving your ChatGPT visibility is a separate discipline — it involves the content ChatGPT cites, the communities where your brand gets discussed, and the sources that feed into AI training data. The tracking foundation has to come first, or you’re optimizing blind.

The short version: ChatGPT tends to cite content that’s indexed by search engines and appears on credible, frequently referenced sources. That includes your own site, but also third-party articles, review sites, and community discussions. If your brand isn’t in those places, it is unlikely to be in ChatGPT’s answers, regardless of how good your product is.

Frequently asked questions

How do I know if ChatGPT is sending me traffic?

Check your analytics for referral sessions from chatgpt.com. You’ll likely see some — but treat it as a floor. ChatGPT often recommends brands without linking, so traffic from users who acted on a recommendation and navigated directly won’t show up as a referral. Signup source surveys fill that gap.

Do I need a paid tool to track ChatGPT visibility?

Manual prompt testing is free and gives you a quick directional read. For ongoing tracking — trend data, competitor benchmarking, cited source analysis — a paid AEO tool is necessary. Manual testing doesn’t scale to the sample size needed for reliable data. Most tools offer 7–14 day free trials.

How often does ChatGPT update what it says about my brand?

ChatGPT’s model weights update periodically, not continuously. The live web browsing feature (when enabled) can surface more recent content, but the base model’s “knowledge” of your brand changes on a training cycle timescale — months, not days. That said, the sources ChatGPT cites in real-time responses are pulled from current web content, so updating your own pages and earning new coverage has near-term impact on citations even if it doesn’t immediately change the model’s baseline opinion of your brand.

What’s the difference between being mentioned and being cited?

A mention is when ChatGPT includes your brand name in an answer. A citation is when ChatGPT links to a specific source. You can be mentioned without being cited — ChatGPT may name your product from training data without referencing any specific page. Citations indicate ChatGPT is actively pulling from a live source. Both matter, but citations are stronger signals of content relevance and trust.

Can competitors affect my ChatGPT visibility?

Yes, indirectly. ChatGPT responses typically include a shortlist of brands — usually 3–5 in a comparison query. If competitors have stronger source coverage, more community mentions, and more frequently cited pages, they occupy more of that shortlist. Your visibility is partly a function of how well your category competitors are covered. That’s why share of voice is a more useful metric than raw mention rate.

Does ChatGPT treat all industries the same way?

No. ChatGPT tends to be more opinionated in categories with strong online communities, established review ecosystems, and frequently updated comparison content — SaaS, consumer electronics, financial products. In niche B2B categories with less online discussion, responses are often more hedged and fewer brands are consistently mentioned. The less-discussed your category, the more a single well-placed piece of content or community presence can move your mention rate.

Want to see your current ChatGPT visibility? Try Airefs free for 7 days — it runs prompts, tracks your mention rate, shows which sources ChatGPT cites in your category, and monitors Reddit for the conversations that feed AI recommendations.
