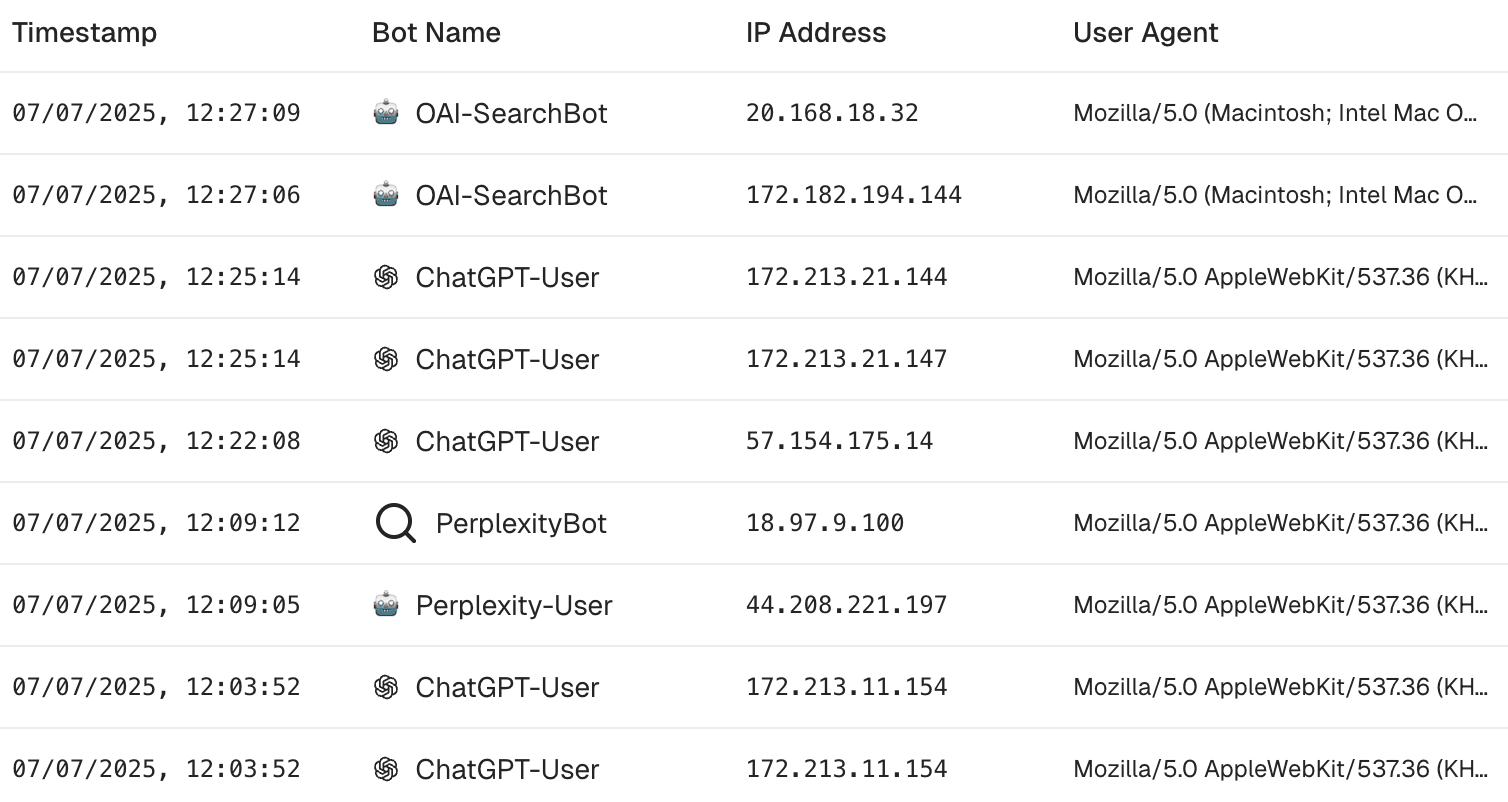

Your website gets more than just human visitors these days. If you check your server logs, you'll see unfamiliar bot names crawling your pages. These aren't the usual search engine crawlers. They're AI bots, and there are a lot of them.

Some collect content to train AI models. Others gather data to answer search questions in real time. Either way, they read your content, and it's up to you to decide whether that's a good thing.
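To see which of these bots already visit your site, you can scan your access log for their user agent tokens. The following is a minimal sketch, not a complete detector: the token list covers only some of the bots named in this article, the function name `count_ai_bot_hits` is made up for illustration, and the sample log lines are simplified, invented entries rather than real traffic.

```python
from collections import Counter

# Tokens drawn from bots named in this article; illustrative, not exhaustive.
AI_BOT_TOKENS = (
    "GPTBot",
    "ChatGPT-User",
    "OAI-SearchBot",
    "ClaudeBot",
    "Claude-User",
    "Claude-SearchBot",
    "Amazonbot",
    "Bytespider",
    "CCBot",
    "PerplexityBot",
    "Perplexity-User",
    "Meta-ExternalAgent",
    "DuckAssistBot",
)

# Invented, simplified log lines used only to demonstrate the function.
SAMPLE_LOG = [
    '203.0.113.5 - - "GET / HTTP/1.1" 200 "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '198.51.100.7 - - "GET /blog HTTP/1.1" 200 "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '192.0.2.44 - - "GET /about HTTP/1.1" 200 "Mozilla/5.0 (X11; Linux x86_64) Firefox/128.0"',
]


def count_ai_bot_hits(log_lines):
    """Count log lines that mention a known AI bot token (case-insensitive)."""
    counts = Counter()
    for line in log_lines:
        lowered = line.lower()
        for token in AI_BOT_TOKENS:
            if token.lower() in lowered:
                counts[token] += 1
                break  # attribute each line to at most one bot
    return counts


if __name__ == "__main__":
    for bot, hits in count_ai_bot_hits(SAMPLE_LOG).most_common():
        print(f"{bot}: {hits}")
```

To run it against a real log, pass an open file object instead of `SAMPLE_LOG`. Keep in mind that user agent strings can be spoofed, so the counts are only a rough indication.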

OpenAI

ChatGPT-User

A user agent to browse websites and fetch information when a user asks for something that requires real-time web data in ChatGPT.

More info: https://platform.openai.com/docs/bots

OAI-SearchBot

A user agent to browse websites and retrieve real-time information when users select "live search" in ChatGPT to get up-to-date web content.

More info: https://platform.openai.com/docs/bots

GPTBot

A crawler that browses websites to collect data used to train OpenAI's AI models.

More info: https://platform.openai.com/docs/bots

Operator

An AI agent developed by OpenAI that could autonomously perform tasks through web browser interactions. It was launched in January 2025 and deprecated in August 2025, with its capabilities integrated into ChatGPT agent mode.

More info: https://openai.com/index/introducing-operator/

Anthropic

ClaudeBot

A crawler to browse public websites to gather content for training its AI language models.

Claude-User

A user agent to visit websites when Claude users ask questions that require real-time information.

Claude-SearchBot

A crawler to browse the web to enhance the quality of search results for users. It's unclear how its role differs from that of Claude-User.

anthropic-ai

An AI agent possibly used by Anthropic to download training data for its large language models that power AI products like Claude. The exact purpose and scope of this agent remain undocumented.

Claude-Web

A web crawler used by Anthropic to gather web content for Claude-related services. This agent enables Claude to reference and discuss web content in conversations.

Amazon

Amazonbot

A crawler used by Amazon to crawl and index web content. The data it gathers enhances services like Alexa, improving search results and the accuracy of spoken responses.

More info: https://developer.amazon.com/amazonbot

Apple

Applebot

A crawler that indexes web content for features like Siri, Spotlight, and Safari search. It also collects data to help train Apple's generative AI models.

More info: https://support.apple.com/en-us/119829

Applebot-Extended

A crawler specifically used to identify and collect web content for training Apple's generative AI models, including Apple Intelligence. This is separate from regular Applebot and can be blocked independently.

More info: https://support.apple.com/en-us/119829

TikTok

Bytespider

A crawler operated by ByteDance, TikTok's parent company, that collects web content for AI model training, including for Doubao, its ChatGPT-style assistant.

No official public documentation available.

Common Crawl

CCBot

A crawler that systematically archives the open web. Its massive dataset is publicly available and widely used for AI training, academic research, and data analysis.

More info: https://commoncrawl.org/ccbot

Perplexity AI

PerplexityBot

A crawler that indexes pages so Perplexity can surface and link to them in its answer citations. According to the company, it is not used to train foundation models.

More info: https://docs.perplexity.ai/guides/bots

Perplexity-User

A user agent that fetches individual pages on demand when a Perplexity user's query requires direct access.

More info: https://docs.perplexity.ai/guides/bots

Meta

Meta-ExternalAgent

A crawler that harvests public web content to train Meta's generative AI systems (e.g., Llama, Meta AI).

More info: https://developers.facebook.com/docs/sharing/webmasters/web-crawlers/

Meta-ExternalFetcher

A user agent that fetches individual links when a user triggers a Meta AI assistant feature. It makes targeted, on-demand requests to retrieve current information that supplements the model's training data.

More info: https://developers.facebook.com/docs/sharing/webmasters/crawler

FacebookBot

A crawler used by Facebook to index web content and fetch metadata such as Open Graph tags for generating rich link previews. It also collects training data for Meta's AI models.

More info: https://developers.facebook.com/docs/sharing/webmasters/crawler

Google

Google-Extended

A robots.txt control token, not a separate crawler, that determines whether Gemini (formerly Bard) and other Google generative AI products may use your content.

More info: https://support.google.com/webmasters/answer/2723646#google-extended

Cohere

cohere-ai

An AI agent dispatched by Cohere's AI chat products in response to user prompts when it needs to retrieve content from the internet.

More info: https://darkvisitors.com/agents/cohere-ai

cohere-training-data-crawler

A crawler operated by Cohere to download training data for its large language models that power enterprise AI products.

More info: https://darkvisitors.com/agents/cohere-training-data-crawler

You.com

YouBot

A crawler used by You.com to index search results that allow their AI Assistant to answer user questions. The assistant's answers typically contain references to the website as inline sources.

More info: https://darkvisitors.com/agents/youbot

DuckDuckGo

DuckAssistBot

An AI assistant that crawls pages in real-time for DuckDuckGo's AI-assisted answers, which prominently cite their sources. This data is not used to train AI models.

More info: https://duckduckgo.com/duckduckgo-help-pages/results/duckassistbot

How to manage these bots in robots.txt

Allow all bots

    User-agent: *
    Disallow:

Block a bot completely

    User-agent: <bot>
    Disallow: /

Allow only specific folders

    User-agent: <bot>
    Allow: /public/
    Disallow: /
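Before deploying rules like these, it can help to check that they behave as intended. The sketch below uses Python's standard `urllib.robotparser` module to test a sample robots.txt. The choice of GPTBot and CCBot, the sample paths, and the helper name `is_allowed` are assumptions made for illustration. Python's parser applies the first matching rule within a group, so the `Allow` line is placed before the broader `Disallow`.

```python
from urllib.robotparser import RobotFileParser

# Example policy: block GPTBot entirely, let CCBot read only /public/,
# and allow every other bot everywhere.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: CCBot
Allow: /public/
Disallow: /

User-agent: *
Disallow:
"""


def is_allowed(robots_txt: str, user_agent: str, path: str) -> bool:
    """Return True if the given robots.txt text lets user_agent fetch path."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, path)


if __name__ == "__main__":
    checks = [
        ("GPTBot", "/article"),
        ("CCBot", "/public/page.html"),
        ("CCBot", "/private/page.html"),
        ("Googlebot", "/article"),
    ]
    for agent, path in checks:
        print(agent, path, is_allowed(ROBOTS_TXT, agent, path))
```

This only confirms how a standards-following parser reads the file. It says nothing about whether a particular bot actually honors it.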

Remember that user-initiated agents such as Claude-User and Perplexity-User may ignore robots.txt; use rate limiting or IP blocking if needed.
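As a rough illustration of the rate limiting idea, here is a minimal in-memory sliding-window limiter keyed by client IP. It is a sketch under simplifying assumptions: the class name `SlidingWindowLimiter` and its parameters are invented for this example, it is not thread-safe, and state is lost on restart. In practice this kind of limit is usually enforced at a reverse proxy, CDN, or firewall rather than in application code.

```python
import time
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most max_requests per client within window_seconds."""

    def __init__(self, max_requests, window_seconds, clock=time.monotonic):
        self._max = max_requests
        self._window = window_seconds
        self._clock = clock  # injectable so the limiter can be tested
        self._hits = defaultdict(deque)  # client key -> request timestamps

    def allow(self, client_key):
        """Record a request and return True if it is within the limit."""
        now = self._clock()
        hits = self._hits[client_key]
        # Drop timestamps that have fallen out of the window.
        while hits and now - hits[0] >= self._window:
            hits.popleft()
        if len(hits) >= self._max:
            return False
        hits.append(now)
        return True


if __name__ == "__main__":
    limiter = SlidingWindowLimiter(max_requests=2, window_seconds=10.0)
    for attempt in range(3):
        # 198.51.100.7 is a documentation-only example address.
        print(attempt, limiter.allow("198.51.100.7"))
```

A request handler would call `allow()` with the client's IP address and respond with HTTP 429 when it returns `False`.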